3ds Max – V-Ray

Server rental

Unleash the power of our v4 servers to turn your projects into reality: speed, reliability, and performance at your fingertips!


OUR BENEFITS

Harness the power of our CPU and GPU servers to accelerate your renders and achieve outstanding results in record time.

Enjoy a smooth, intuitive experience with our render farm, designed to be easy to use even for novice users.

Discover a professional rendering solution at an affordable price, offering great value for budget-conscious artists and studios.

Our technical support team comes highly recommended by our customers: available and responsive, ready to quickly solve your problems and answer all your questions.

Enjoy enhanced security with our farm, thanks to advanced confidentiality protocols (secure transfer, individual authentication per project, secure payment, etc.) and constant monitoring that protect your confidential data.

Take advantage of a flexible, customised rendering solution with tailored options that fit your specific projects and creative requirements.

CUSTOM RENDERING FARM

3D SOFTWARE

Would you like to rent your own machines?

« A human render farm! If you need help, there's someone there! »

Frederic TANTIN

14/12/2022

« Great renderfarm and customer service! Nothing more to say »

Manon Fournie

07/12/2022

« Excellent render farm and incredible technical support! »

Marco Padovani

17/01/2023

Why us?

We offer graphic designers powerful GPU & CPU computing servers, regardless of their industry or company size. With a global presence, we have developed our service to provide a comprehensive solution tailored to our customers' needs.

Supported plugins

Customised solutions

Powerful computing servers

3ds Max – V-Ray


3ds Max – Corona Renderer

3ds Max – Corona Renderer: Intel Core i7 6850K, rendered at low priority.

3ds Max – FStorm Renderer

Maxwell Render 4

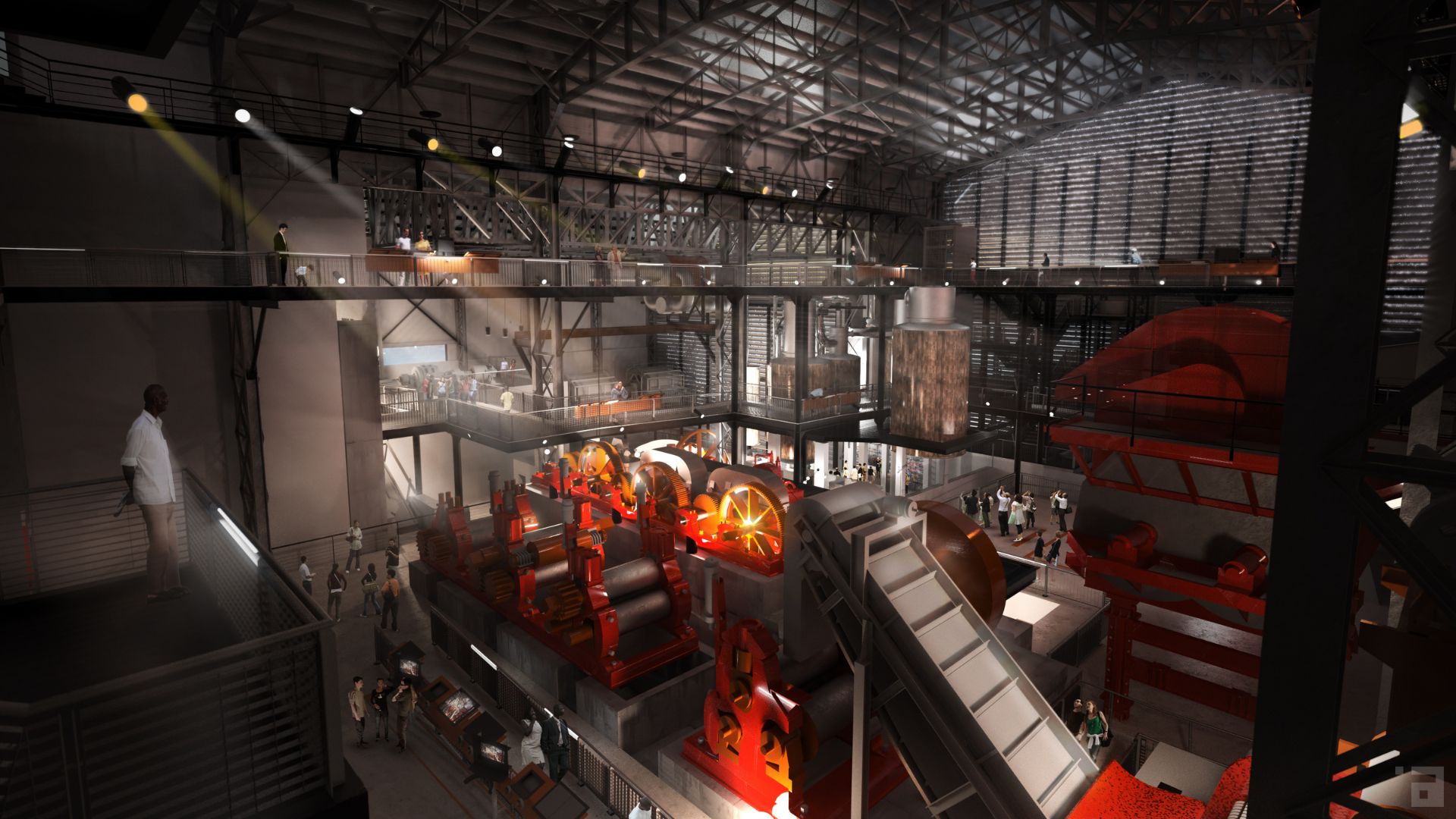

ILLUMINENS is a creative and communication studio dedicated to architecture. https://www.illuminens.com/

3ds Max – Corona Renderer

Rubis 3Design is a French design studio for interior and exterior spaces.

Maxwell Render 5

Cinema 4D – Arnold

“We created a 3D animation featuring the new Excalibur Spider Pirelli, Roger Dubuis’ first fully customizable watch.” Point Flottant

https://pointflottant.com/project/roger-dubuis-pirelli-one-click

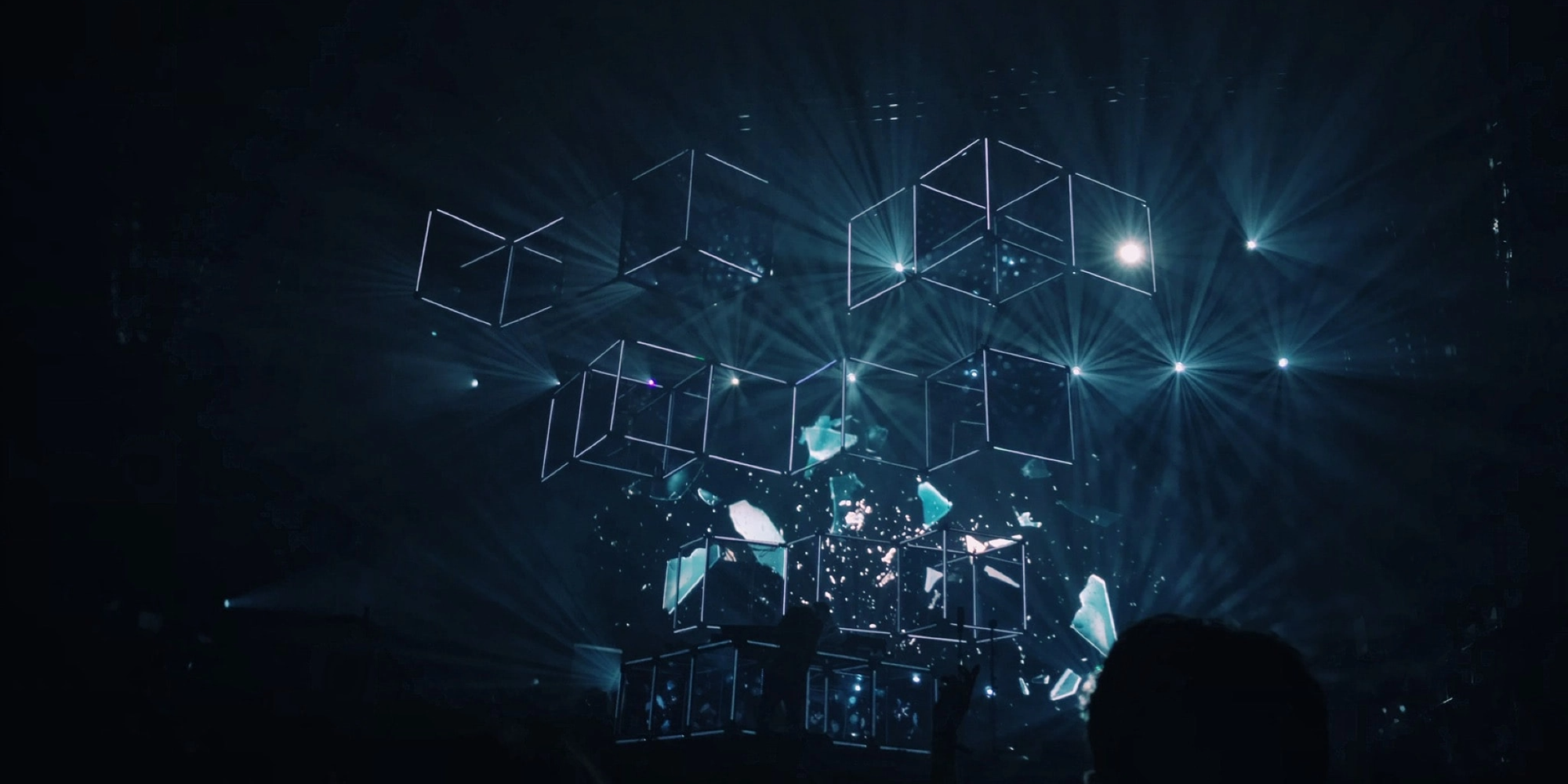

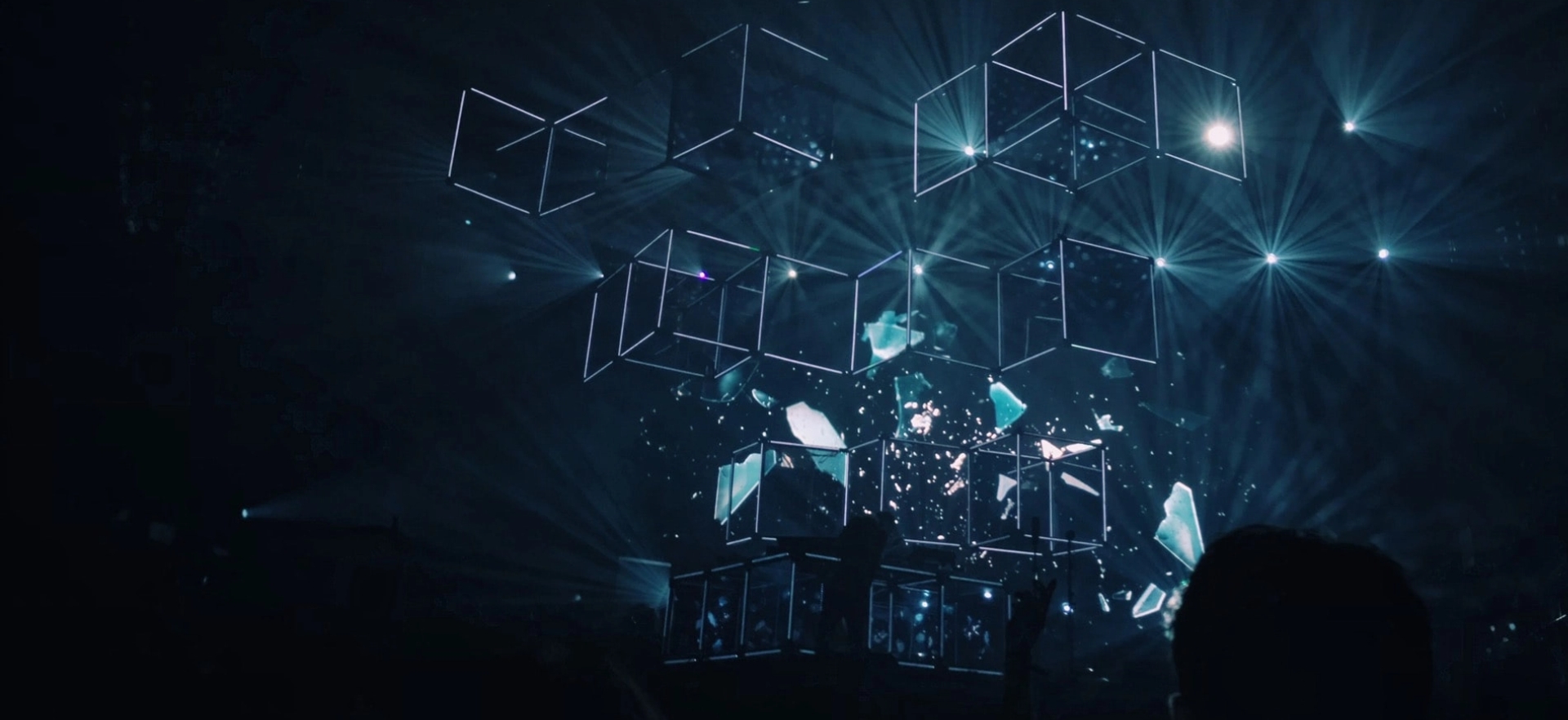

Cinema 4D – Redshift

Cinema 4D – Octane

Cinema 4D – Redshift

Samsung partnered with CLIM Studio to create the screensavers for the new Galaxy A series.

Houdini – Redshift

Cinema 4D – Arnold

"We worked with Messika to help them create a magical 3D factory, celebrating the art of giving and showcasing their iconic jewellery for Christmas." Point Flottant

https://pointflottant.com/project/messika-art-of-gifting-christmas-2021

Cinema 4D – Arnold

Production: TAXFREEFILM

Concept: Franco Tassi, Giovanni Grauso, Antonio Vignali

Design & Art Direction: Giovanni Grauso

Direction: Giovanni Grauso

3D Artists: Giovanni Grauso, Andrea Bazzini, Davide Quagliarini, Francesco Tassi, Nicolò Graiani

Poster and Print Post-Production: Maria Datei

Music: Harry Gregson-Williams

Sound Design: Smider

Maya 2023 – Renderman


Maya 2023 – Redshift (895212)

Maya 2023 + Redshift project (895212)

3ds Max – V-Ray

A little animation whipped up for the Mississippi State fan site HailState+. Full video at https://hailstateplus.com/videos/leachs-speeches-happy-halloween

3ds Max – Corona Renderer

3ds Max + Corona Renderer, Intel Core i7 6700K at 4 GHz

3ds Max – Corona Renderer

Images by QuadSpinner

Maya – V-Ray

Cinema 4D – V-Ray

Conceptual video created in collaboration with AF517Atelier(s) Alfonso Femia, presented at La Biennale di Venezia 2021 to provoke creative debate about life and architecture.

Cinema 4D – X-Particles – Octane

Graphics/VFX: Dale Titterton

Cinema 4D – Redshift

"We worked with BT Sport to create a series of promos for the 2021 MotoGP season. Turning the riders into toy characters, we dived into the world of what goes on at the track when the day of racing comes to an end."

All the latest news on the innovations offered by Ranch Computing